In this post we will show you the different ways you can compose a Request Body: from the simplest to the most complex.

Copy and paste the body from somewhere

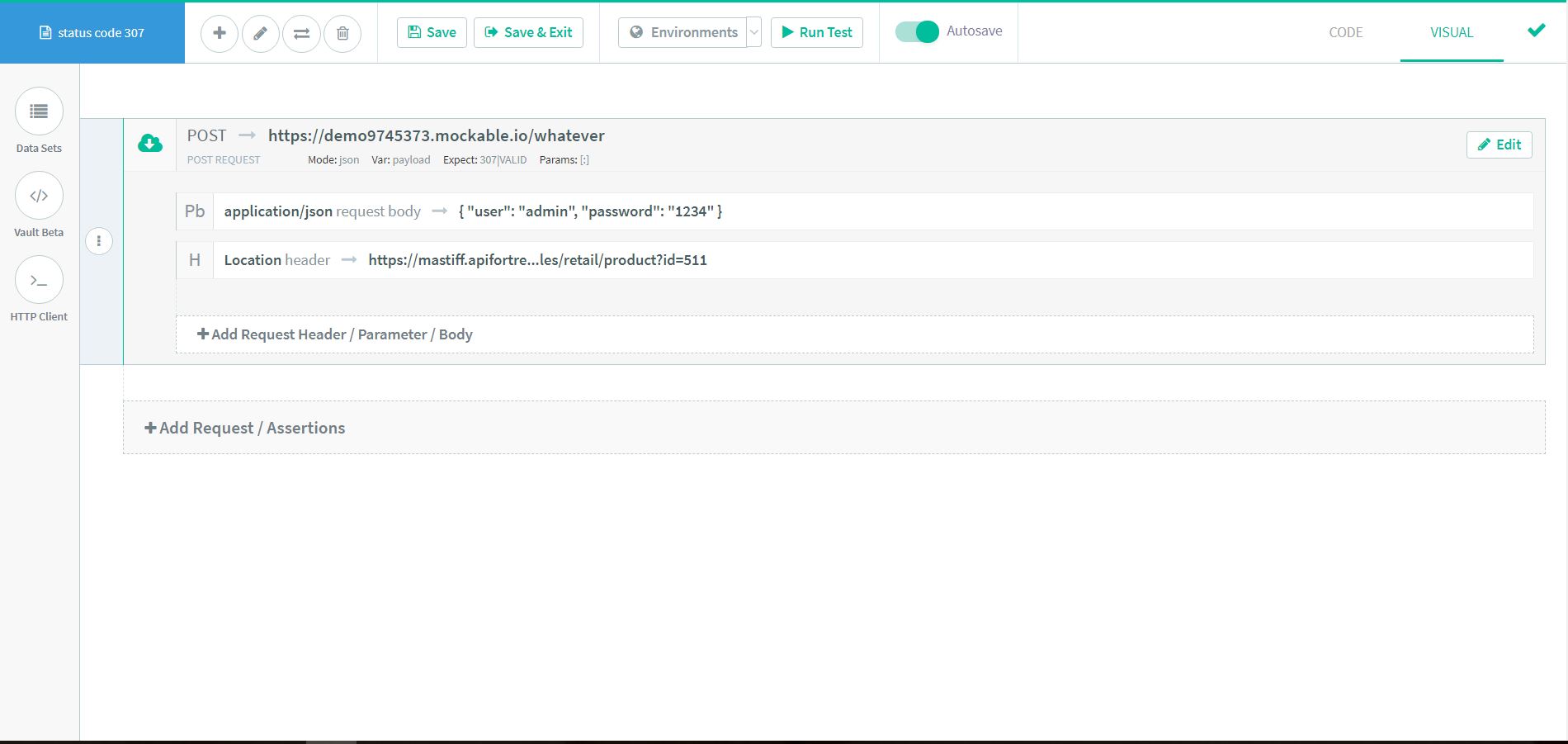

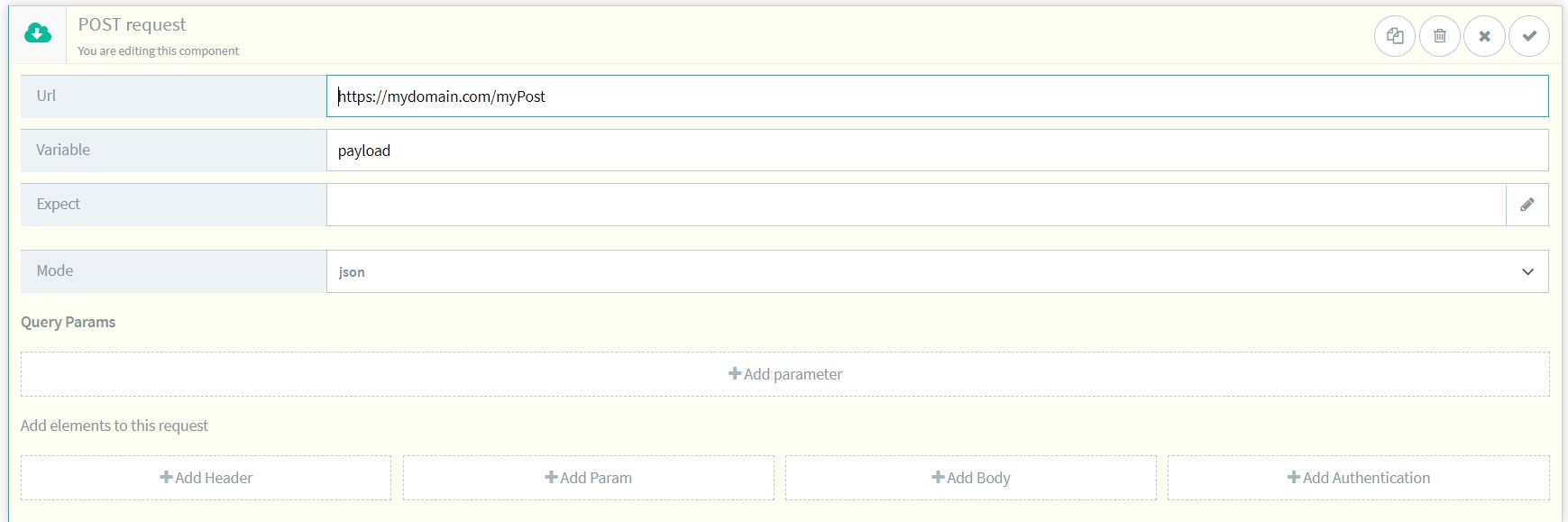

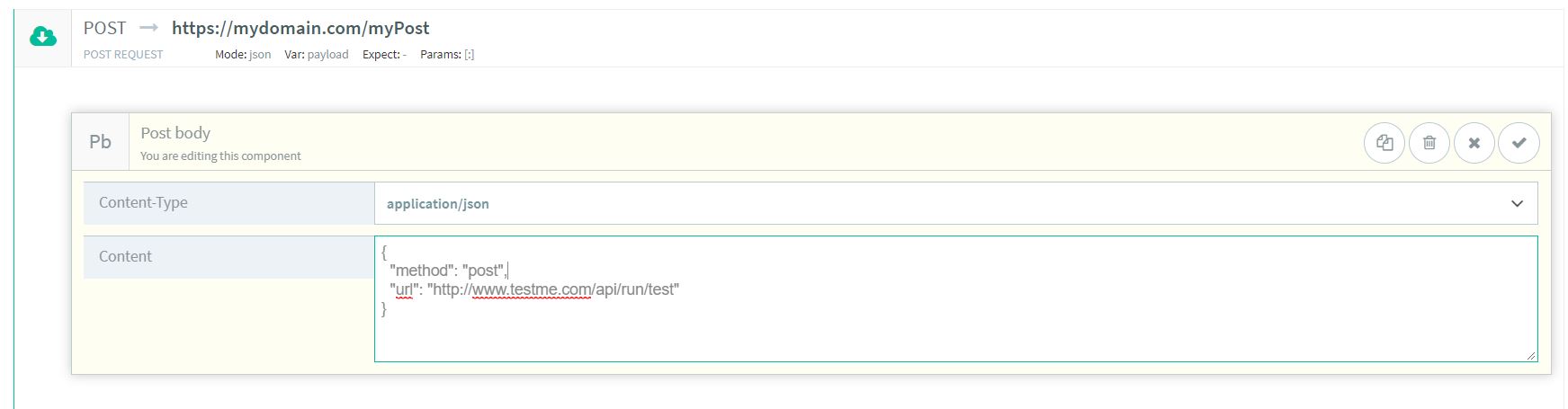

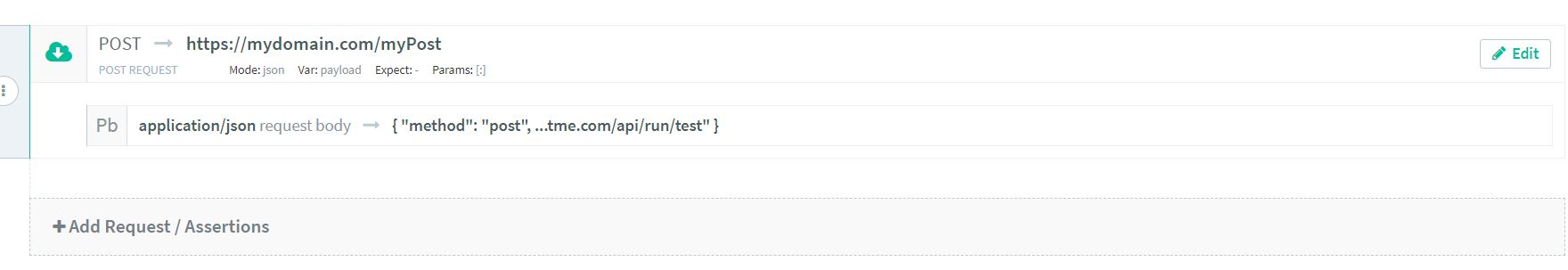

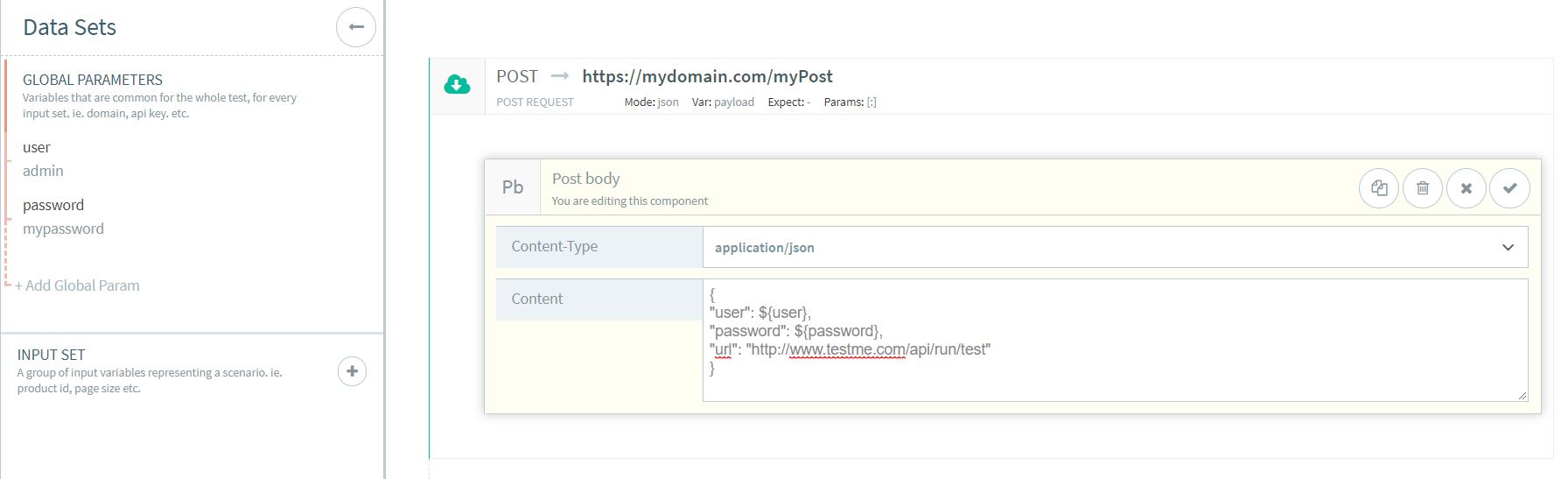

The first and easiest way is to take a body from somewhere and paste it as is into the call. Let’s see how this is done:

- In the composer we add the POST component and type the URL and all of the required fields.

  - Url: https://mydomain.com/myPost (the URL of the resource you want to test)
  - Variable: payload (the name of the variable that contains the response)
  - Mode: json (the type of the response)

- Now we add the Body component and, after selecting the Content-Type, we paste the body into the Content field.

  - Content-Type: application/json
  - Content: {"method":"post","url":"http://www.testme.com/api/run/test"} (the body required in your call)

- Now we can execute the call and proceed with the test.
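For readers who prefer to see the steps as code, the request composed above corresponds roughly to the following Python sketch. It is not how the composer works internally; it only builds the same POST (without sending it) using the standard library. The helper name `build_post` is an assumption made for illustration.

```python
import json
import urllib.request


def build_post(url: str, content: str,
               content_type: str = "application/json") -> urllib.request.Request:
    """Build (but do not send) a POST request carrying `content` as its body."""
    return urllib.request.Request(
        url,
        data=content.encode("utf-8"),
        headers={"Content-Type": content_type},
        method="POST",
    )


# The body is pasted as is, exactly like in the Content field.
body = '{"method":"post","url":"http://www.testme.com/api/run/test"}'
request = build_post("https://mydomain.com/myPost", body)

# Parsing the body back confirms that the pasted text is valid JSON.
parsed = json.loads(request.data)
```

Passing `request` to `urllib.request.urlopen` would actually perform the call; that step is left out here so the sketch runs without a network.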

Using Variables in the Request Body

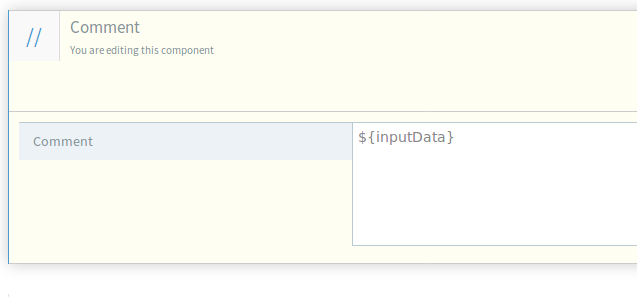

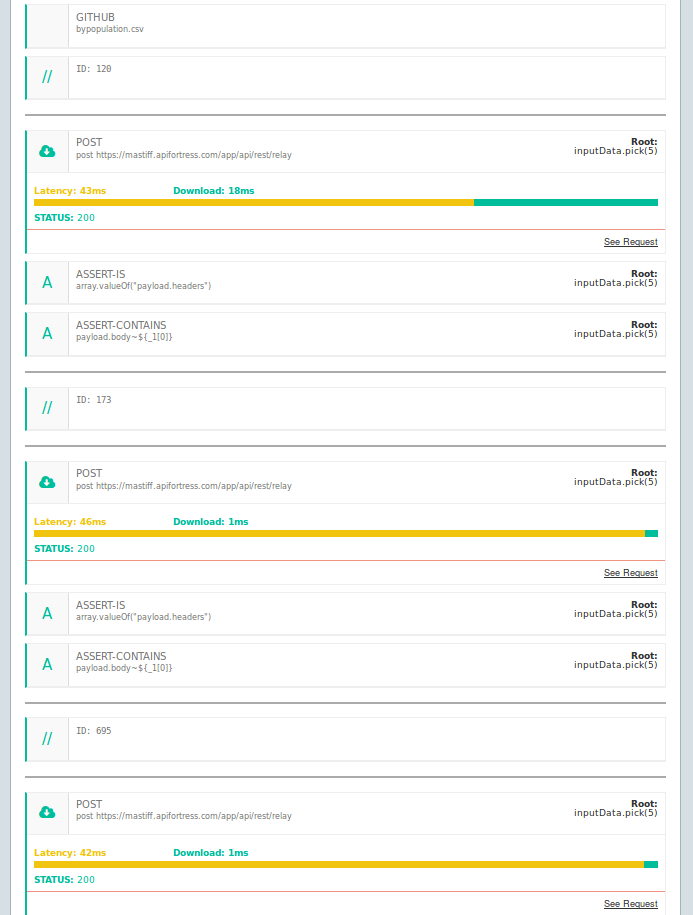

Another way to compose a request is using variables in the body.

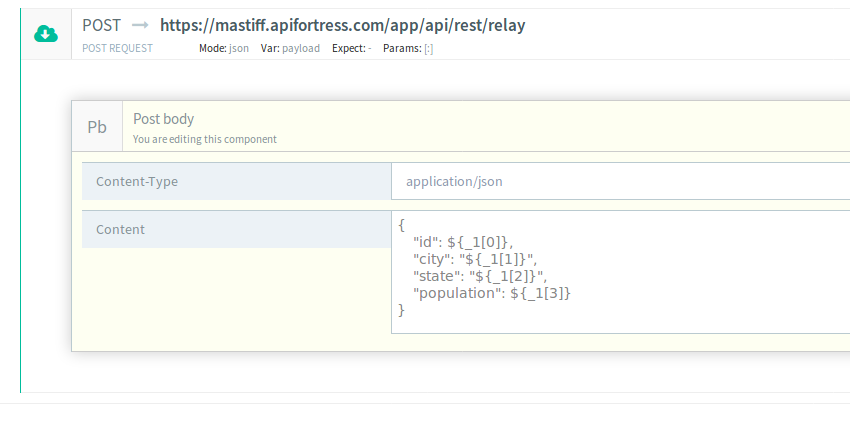

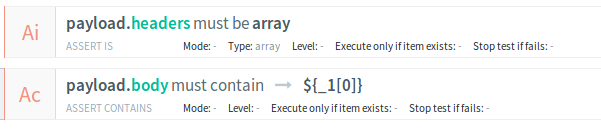

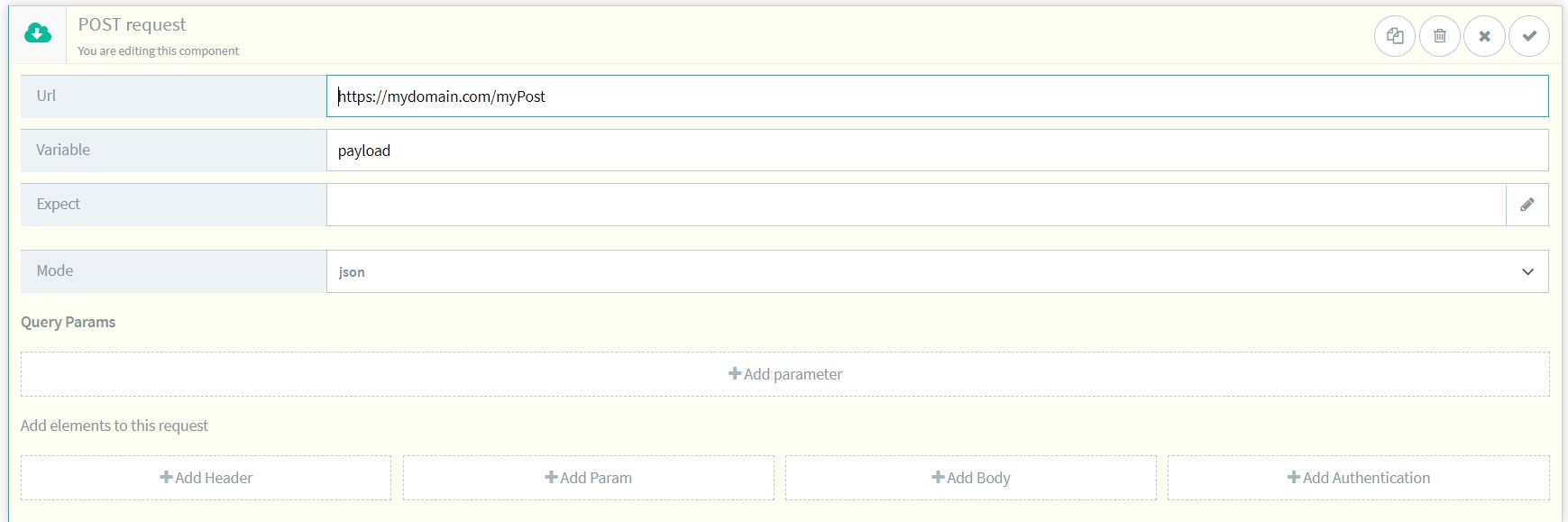

- In the composer we add the POST component and type the URL and all the required fields.

  - Url: https://mydomain.com/myPost (the URL of the resource you want to test)
  - Variable: payload (the name of the variable that contains the response)
  - Mode: json (the type of the response)

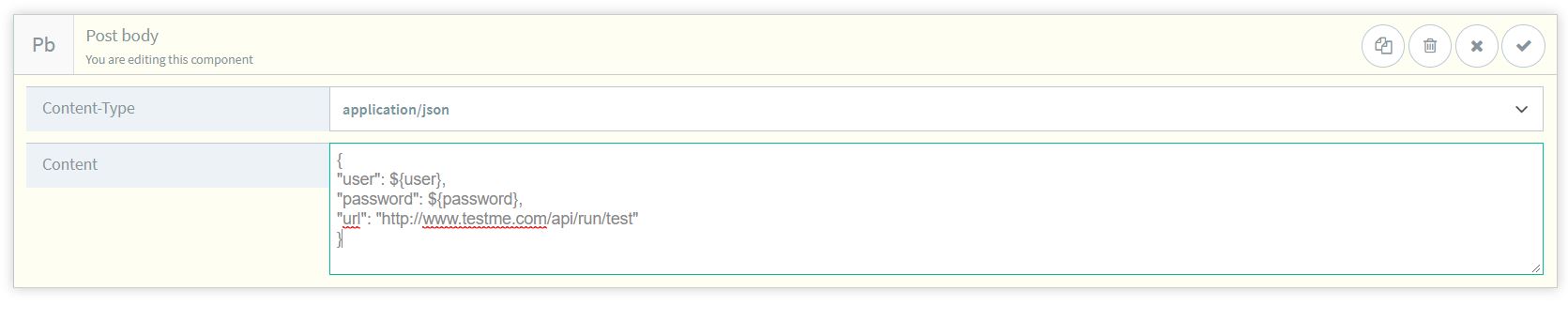

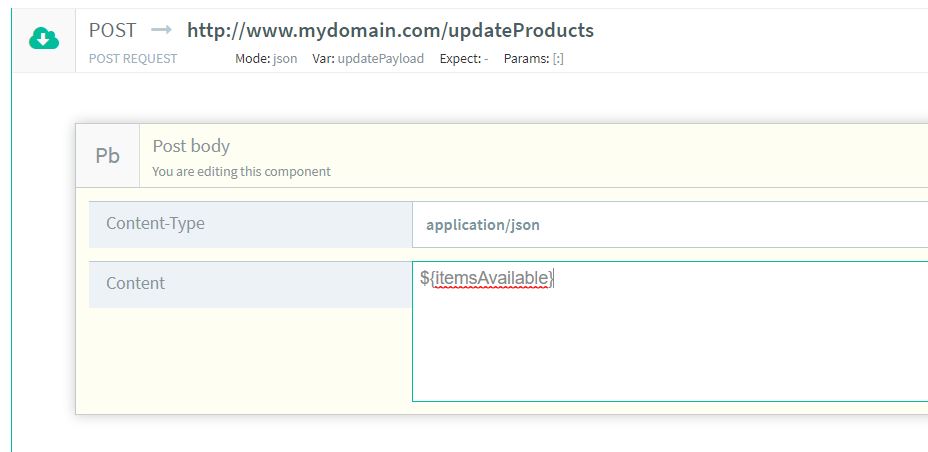

- We add the Body component. In the Content-Type we choose the proper one, let’s say application/json. In this scenario we need to use a variable so in the Content field we enter the following:

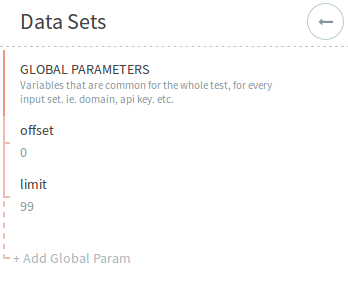

{ "user": ${user}, "password": ${password}, "url": "http://www.testme.com/api/run/test" }

In this scenario “user” and “password” are not passed directly in the body; they are variables defined as global parameters in the data set.

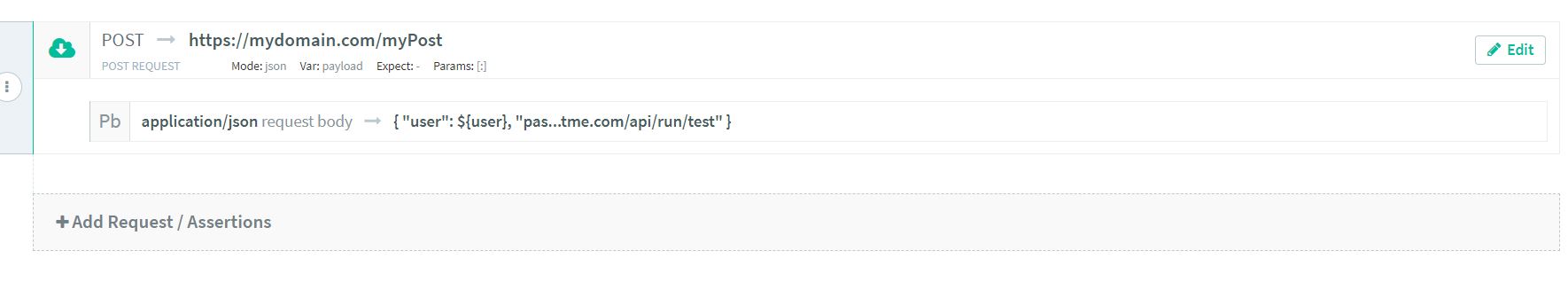

- The POST is now complete and can be executed.
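The placeholder substitution described above can be imitated outside the tool with Python's `string.Template`, which happens to use the same `${name}` syntax. This is only a sketch: the helper `render_body` and the sample values are hypothetical, and JSON-encoding each value before substitution is an assumption made here so that the unquoted placeholders produce valid JSON.

```python
import json
from string import Template


def render_body(template: str, variables: dict) -> str:
    """Replace each ${name} placeholder with the JSON encoding of its value."""
    encoded = {name: json.dumps(value) for name, value in variables.items()}
    # substitute() raises KeyError if a placeholder has no matching variable.
    return Template(template).substitute(encoded)


template = ('{ "user": ${user}, "password": ${password}, '
            '"url": "http://www.testme.com/api/run/test" }')

# Hypothetical values standing in for the global parameters of the data set.
body = render_body(template, {"user": "admin", "password": "password"})
data = json.loads(body)
```

Because `substitute` fails loudly on a missing variable, a typo in a placeholder name surfaces before the request is sent rather than as a malformed body.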

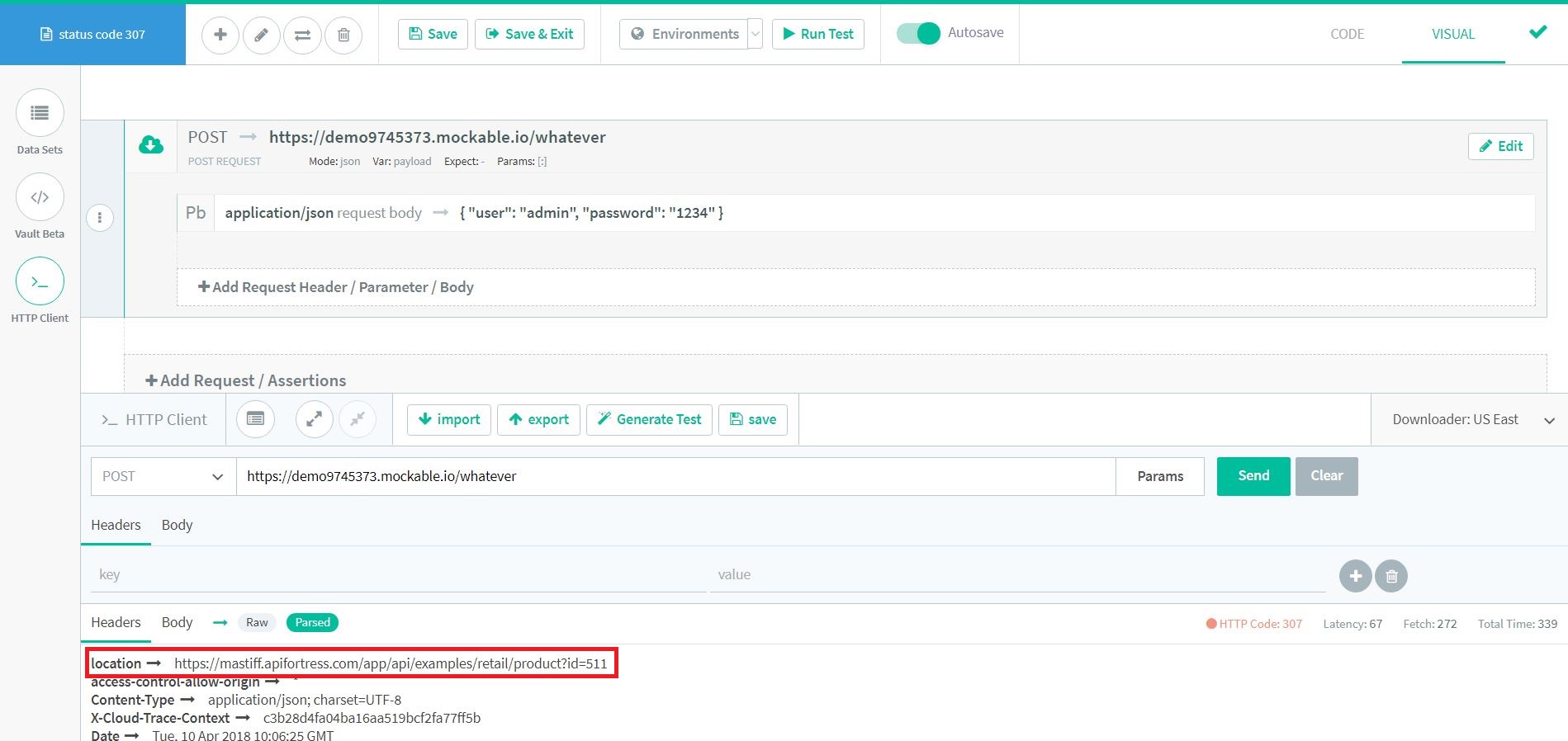

Using a Variable from another call

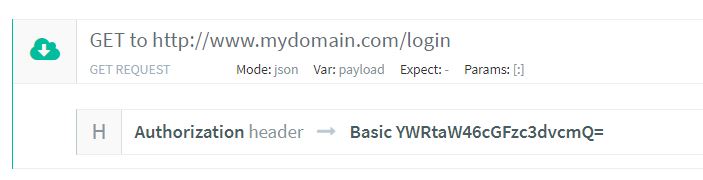

The next way to compose a Request Body is by using a variable from another call. Let’s see how this can be done.

- The first thing we need to do is add the call we will retrieve the variable from. Let’s consider, as an example, the common scenario where we need to perform a login for authentication and retrieve the authentication token required for the following call.

  - Url: http://www.mydomain.com/login (the URL of the resource you want to test)
  - Variable: payload (the name of the variable that contains the response)
  - Mode: json (the type of the response)
  - Header name: Authorization
  - Header value: Basic YWRtaW46cGFzc3dvcmQ= (this value comes from encoding username:password in Base64)

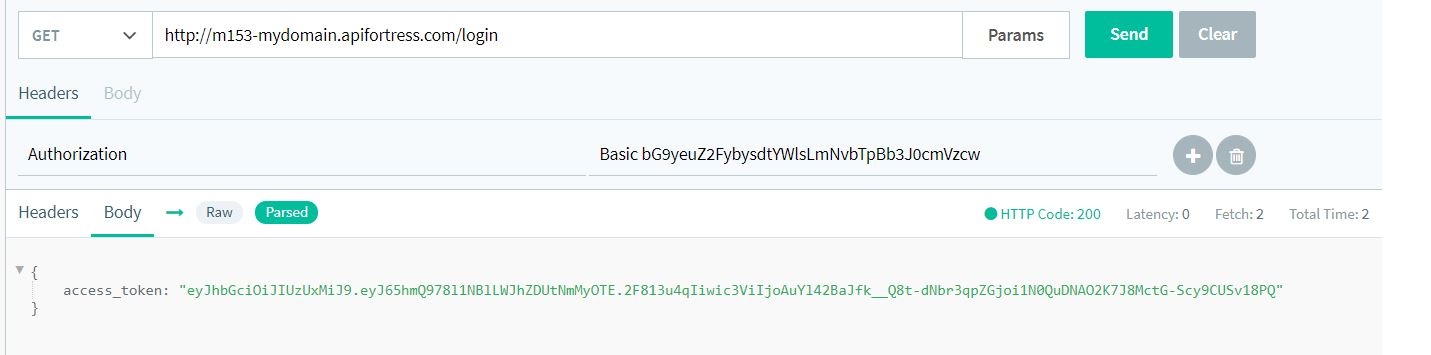

- Executing the login returns the desired token in the response. Let’s see it in our console.

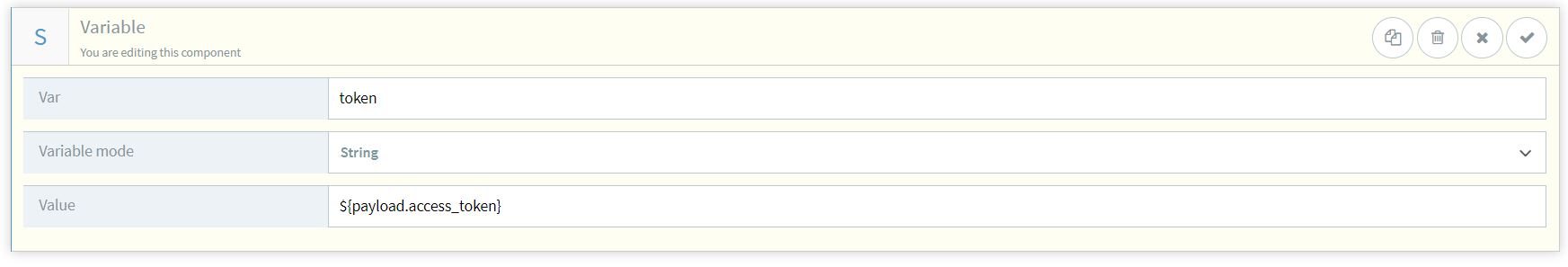

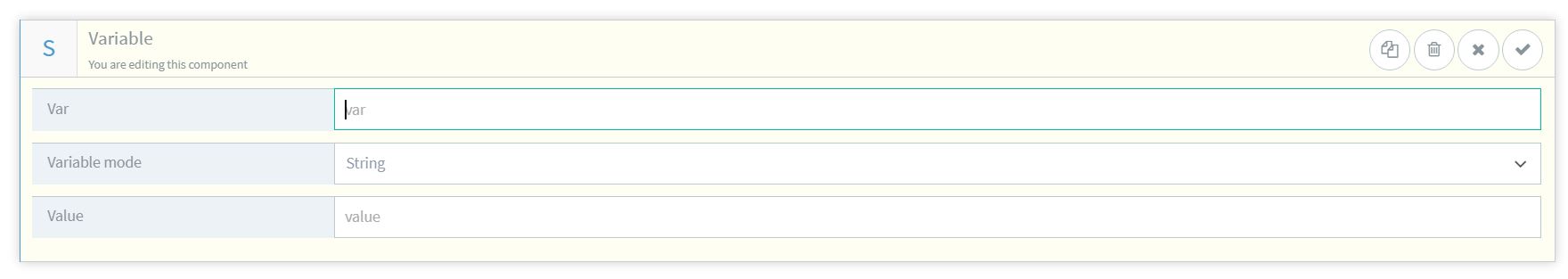

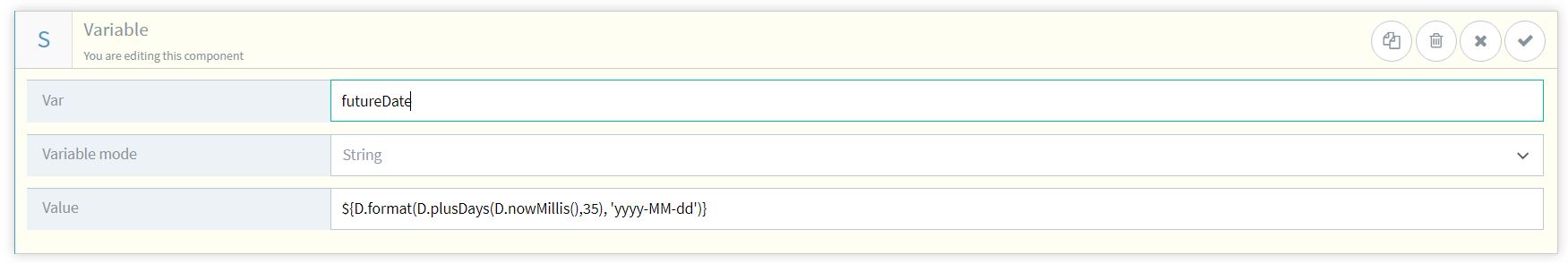

- Now we need to save the token as a variable.

  - Var: token (the name of the variable)
  - Variable mode: String (the type of the variable)
  - Value: ${payload.access_token} (we retrieve the value from the previous 'payload')

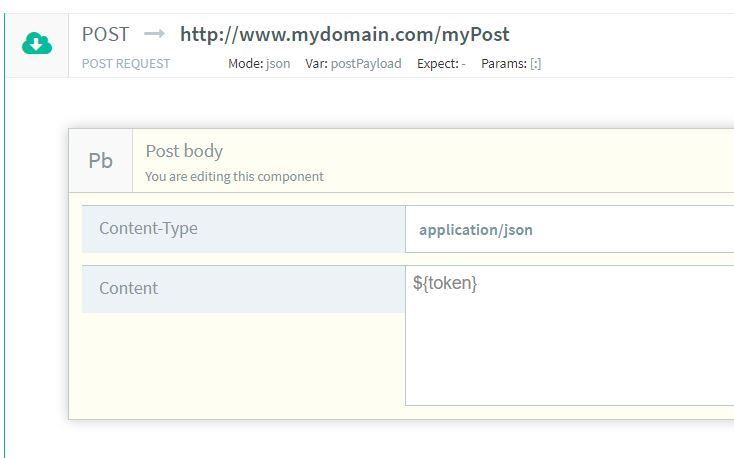

- Once the token has been saved as a variable, we can add the second call and use that token in the Request Body.

  - Content-Type: application/json
  - Content: {"token":"${token}"}

- In the composer we add the POST component and type the URL and all the required fields.
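The login-then-reuse flow above can be sketched in Python as follows. The sample header value in this post decodes to `admin:password`, which the first helper reproduces. The helper names and the sample login response are hypothetical; the real response depends on the API under test, and the only thing assumed about it is that it exposes an `access_token` field, as the `${payload.access_token}` expression implies.

```python
import base64
import json


def basic_auth_header(username: str, password: str) -> str:
    """Return the value of an HTTP Basic Authorization header."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def body_with_token(login_response: str) -> str:
    """Read access_token from the login response and build the next body."""
    payload = json.loads(login_response)
    token = payload["access_token"]  # same lookup as ${payload.access_token}
    return json.dumps({"token": token})


header = basic_auth_header("admin", "password")

# Hypothetical login response used only to exercise the sketch.
sample_response = '{"access_token": "abc123"}'
next_body = body_with_token(sample_response)
```

Building the second body with `json.dumps` rather than string concatenation keeps the result valid even if the token contains quotes or backslashes.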

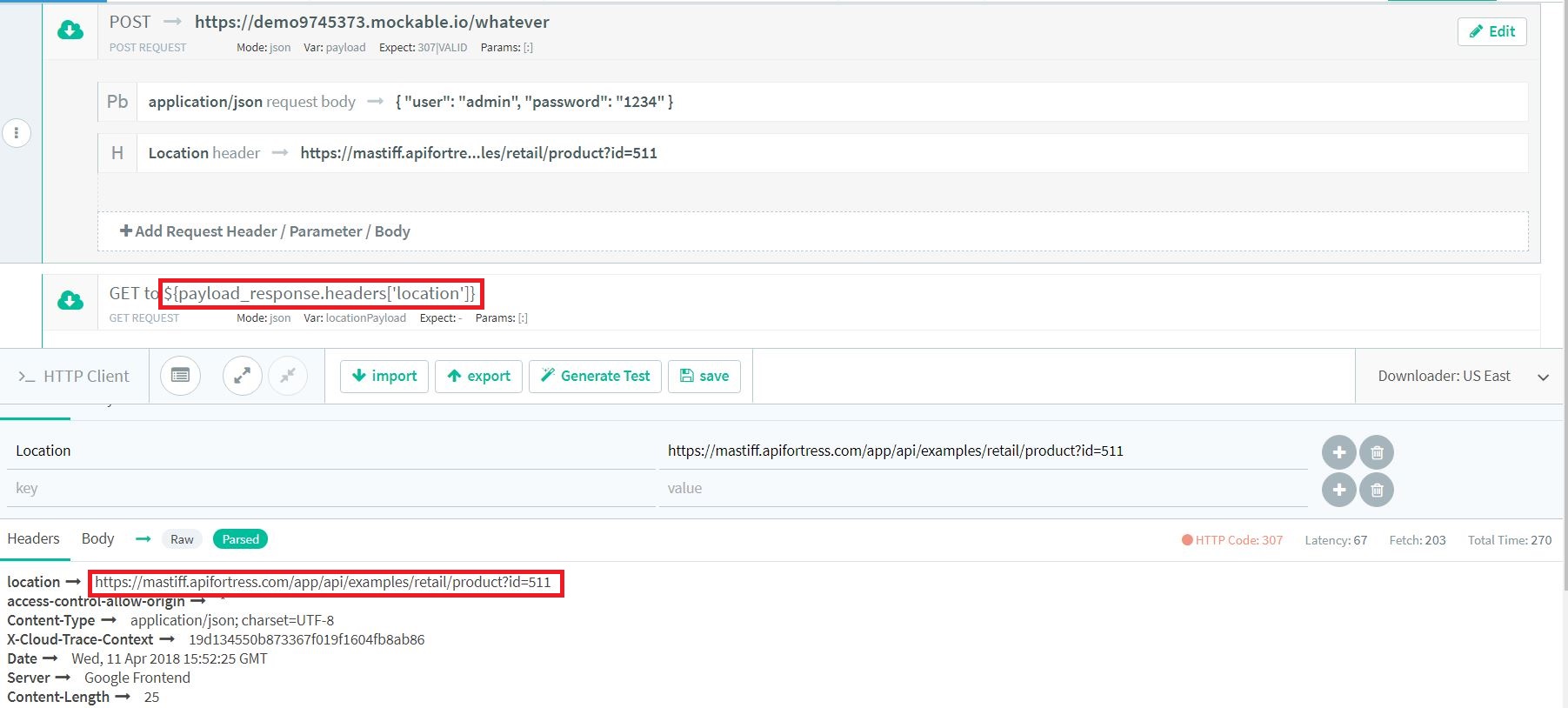

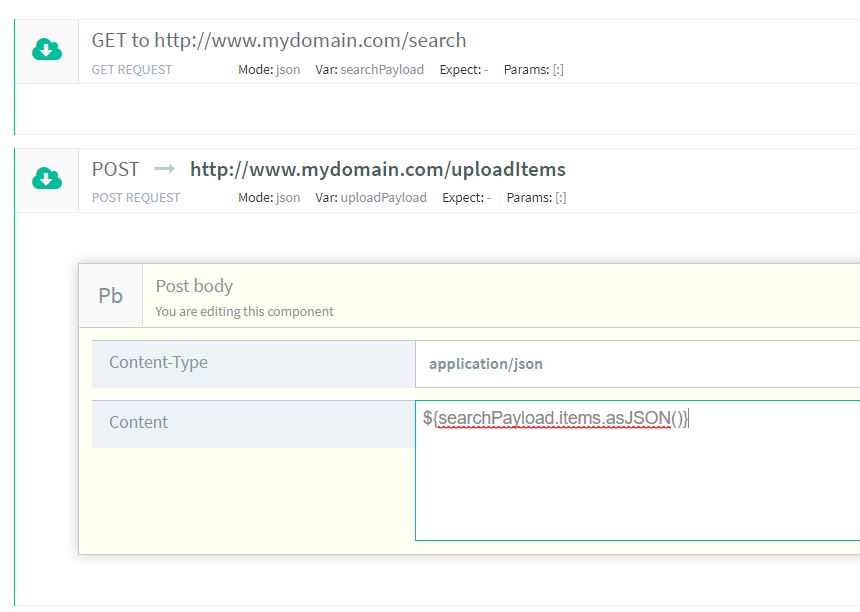

Using an object from another call

- First, we perform the call that we will retrieve the object from.

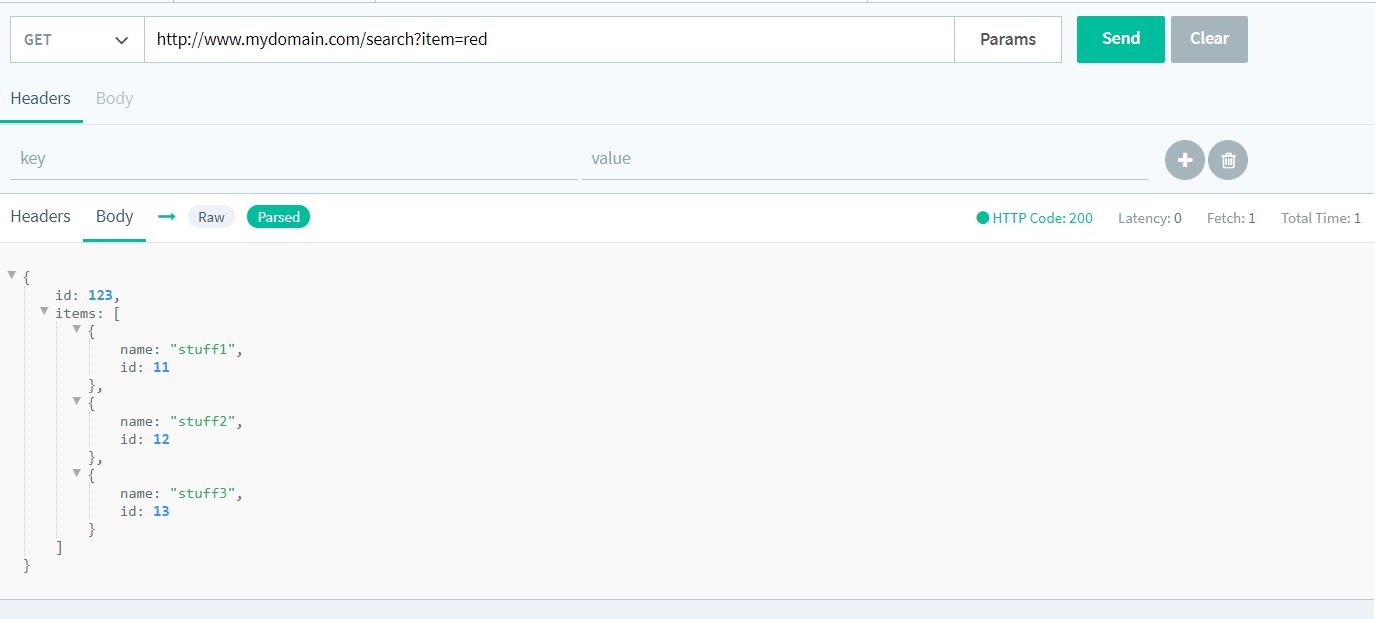

- Let’s execute the call in our console in order to see the response.

{ "id": 123, "items": [ { "id": 11, "name": "stuff1" }, { "id": 12, "name": "stuff2" }, { "id": 13, "name": "stuff3" } ] }

- Let’s say we need the object ‘items’ as the body in the subsequent call. So, as a second call, we will add a POST and we will type the following as body:

${searchPayload.items.asJSON()}

- Now we can proceed with the test.
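As a rough Python equivalent of `${searchPayload.items.asJSON()}`, the sketch below parses the response shown above, picks out `items`, and serializes only that part as the next request body. The variable names are illustrative and mirror the `searchPayload` variable used in the expression.

```python
import json

# The response of the first call, as shown in the console.
search_response = """
{ "id": 123,
  "items": [ { "id": 11, "name": "stuff1" },
             { "id": 12, "name": "stuff2" },
             { "id": 13, "name": "stuff3" } ] }
"""

search_payload = json.loads(search_response)

# Only the "items" array becomes the body of the subsequent POST.
body = json.dumps(search_payload["items"])
```

Note that the resulting body is a JSON array, not an object, so the receiving endpoint has to accept an array at the top level.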

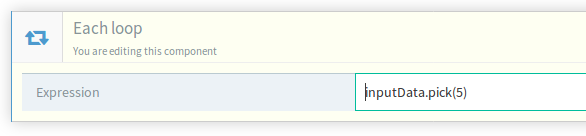

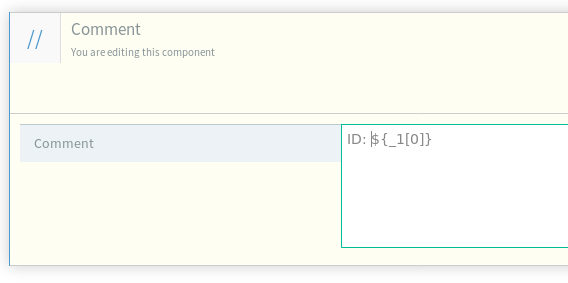

In the next example we will show you a more complex case: the scenario where we need to use an object retrieved from a previous call in the body of a subsequent call. Let’s take a look at an example:

Creating a new structure to add as a body

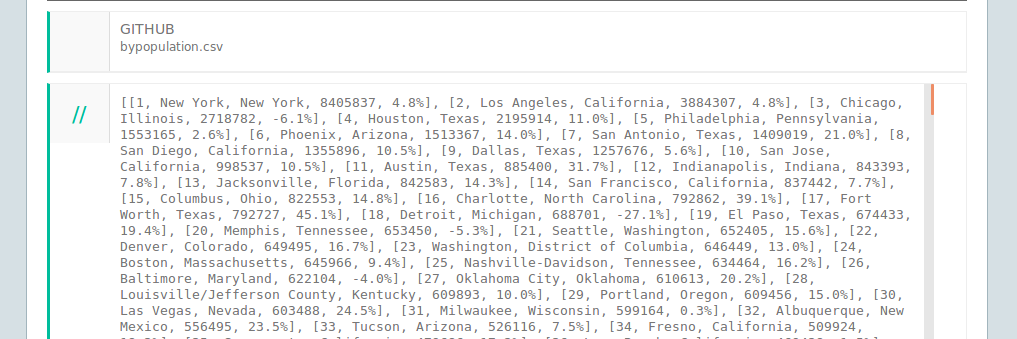

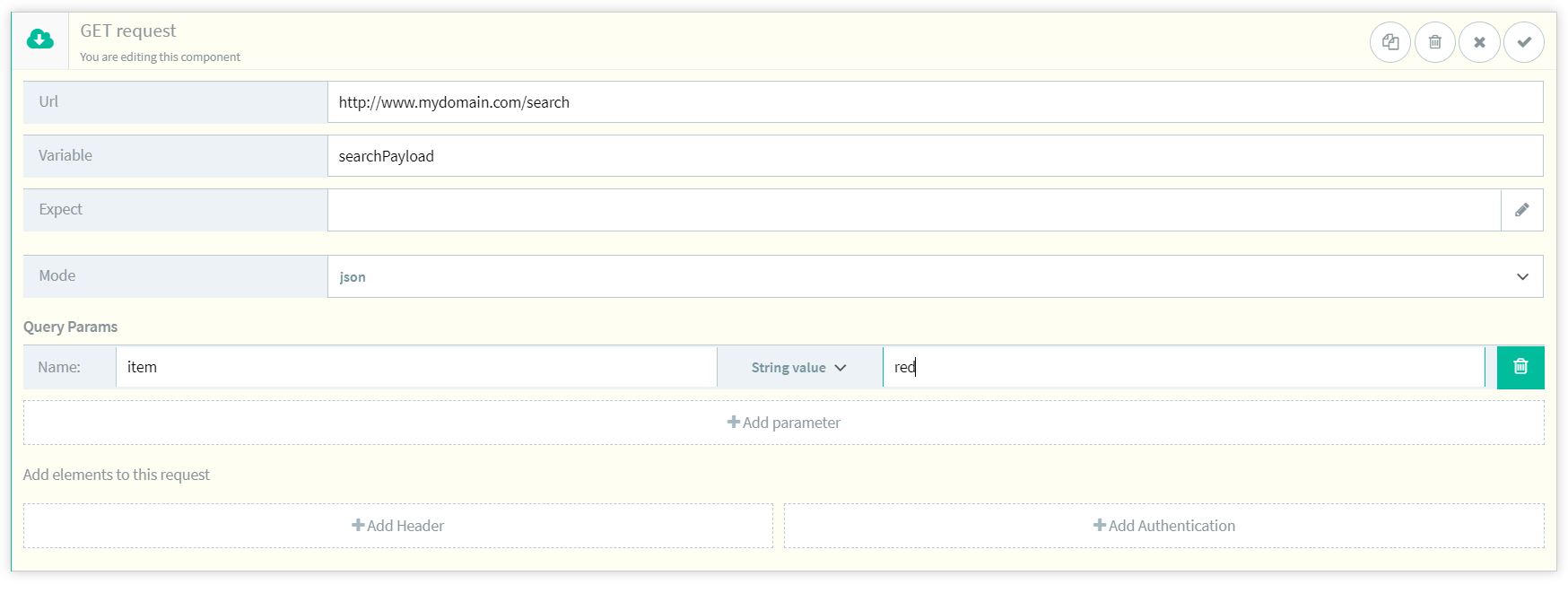

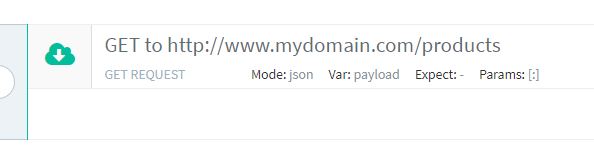

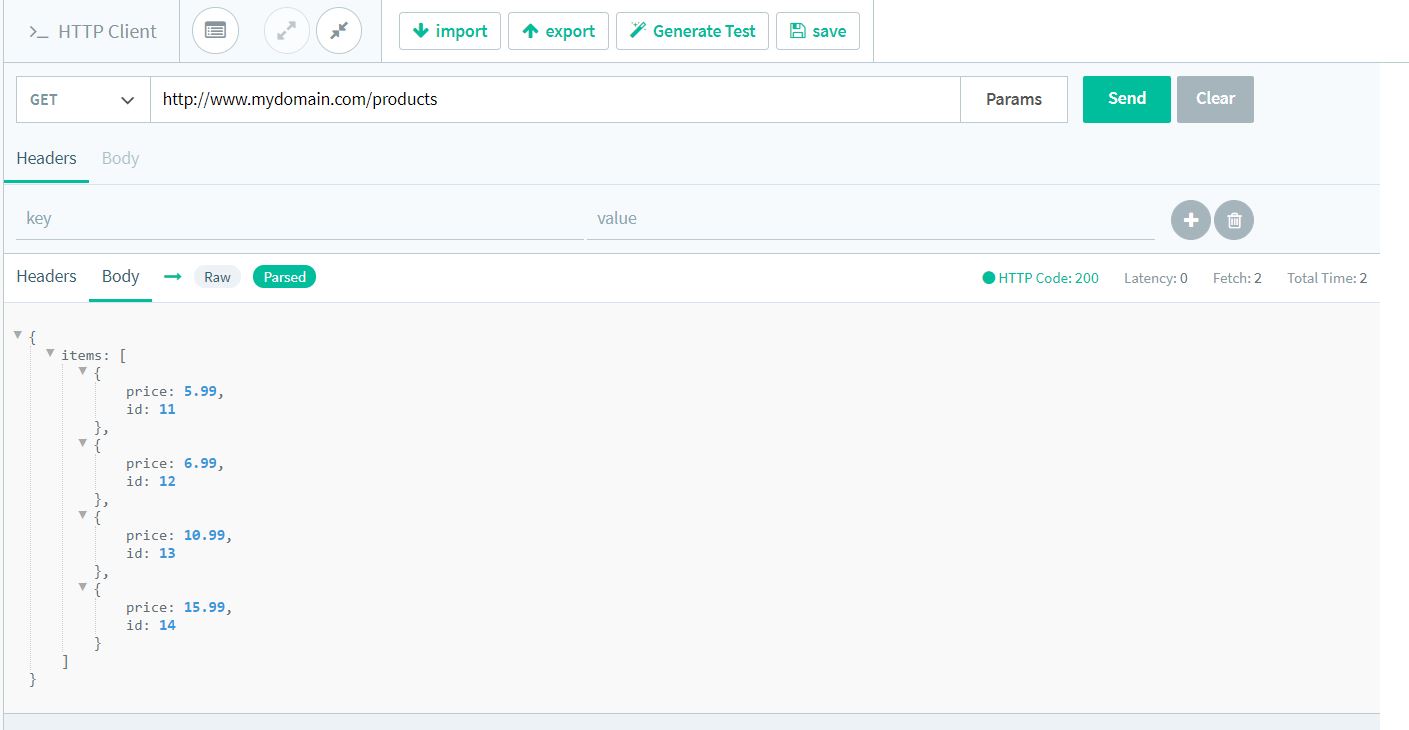

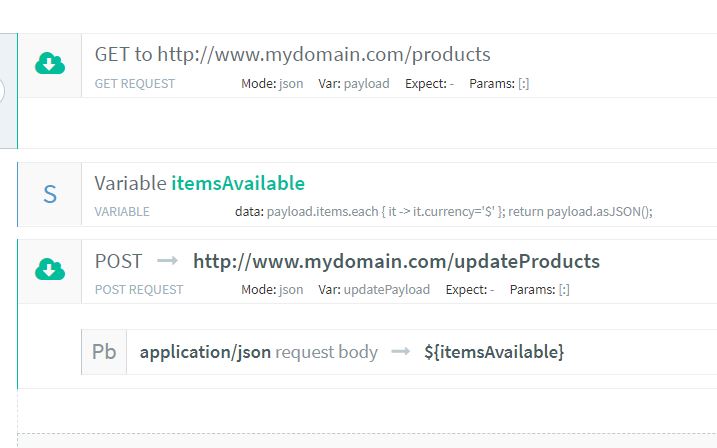

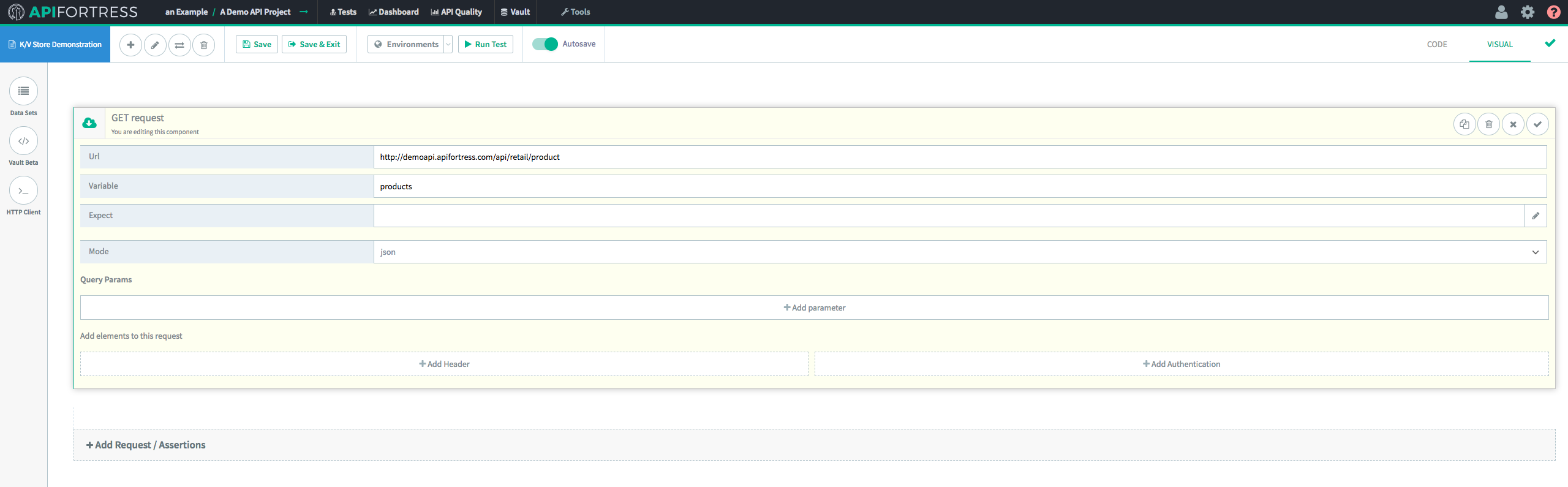

- The first thing we have to do is to perform the call which retrieves the data we’re using. Let’s consider a GET that returns an array of items.

- Let’s see the response using our console.

{ "items": [ { "id": 11, "price": 5.99 }, { "id": 12, "price": 6.99 }, { "id": 13, "price": 10.99 }, { "id": 14, "price": 15.99 } ] }

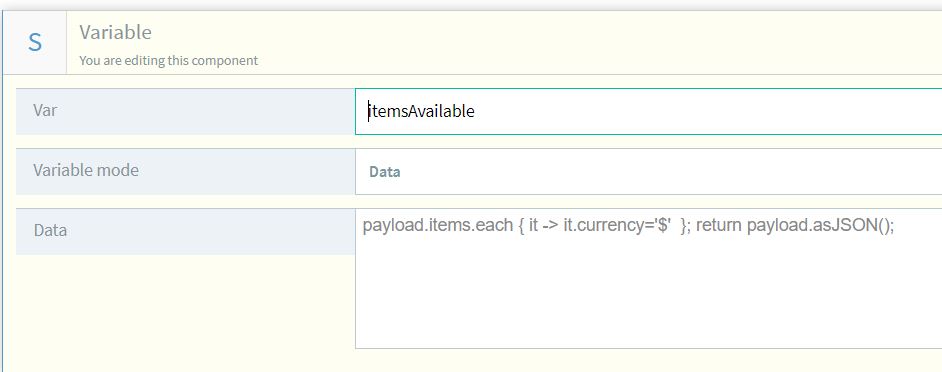

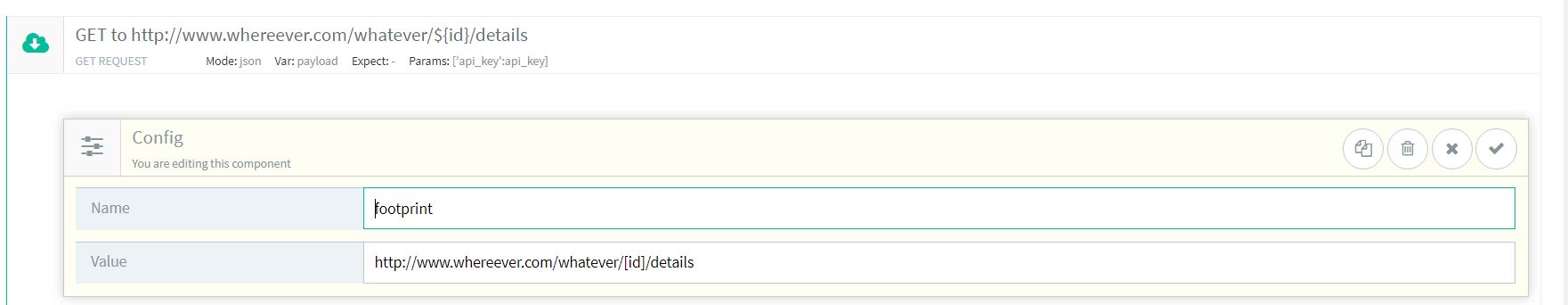

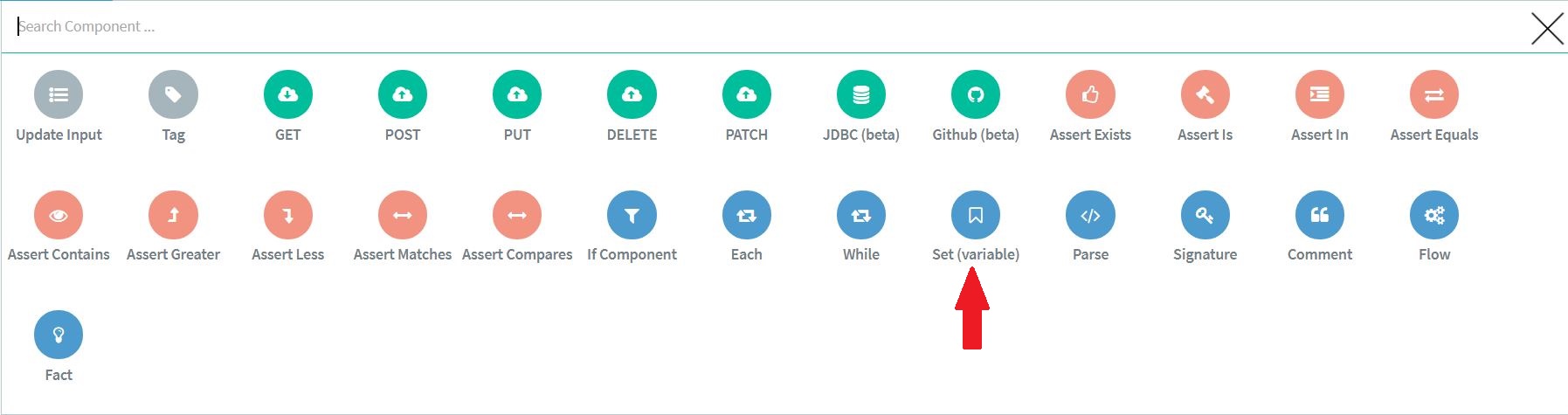

- Now we need to create the new data structure. To do so, we add a SET component as follows:

  payload.items.each { it -> it.currency='$' }; return payload.asJSON();

  (for each item in the array, we add the currency attribute with "$" as value)

- Now we can add the POST and add the new structure as the POST request body:

- That’s it. Now we can proceed with the test.
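To make the transformation concrete, here is a Python sketch of what the SET component's expression does: it walks the `items` array, adds a `currency` attribute to each item, and serializes the whole payload. The function name `add_currency` is hypothetical and used only for illustration.

```python
import json


def add_currency(payload: dict, symbol: str = "$") -> str:
    """Add a currency attribute to every item and return the payload as JSON."""
    for item in payload["items"]:
        item["currency"] = symbol  # modifies the item in place
    return json.dumps(payload)


# The response of the GET, as shown in the console.
payload = json.loads("""
{ "items": [ { "id": 11, "price": 5.99 },
             { "id": 12, "price": 6.99 },
             { "id": 13, "price": 10.99 },
             { "id": 14, "price": 15.99 } ] }
""")

new_body = add_currency(payload)
```

Like the original expression, the sketch changes the existing payload rather than a copy, so any later step that reads `payload` sees the added attribute as well.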

The last scenario is another, more complex one: we need to create a new structure to add as a body, using data from a previous call. Let’s see how we can do this:

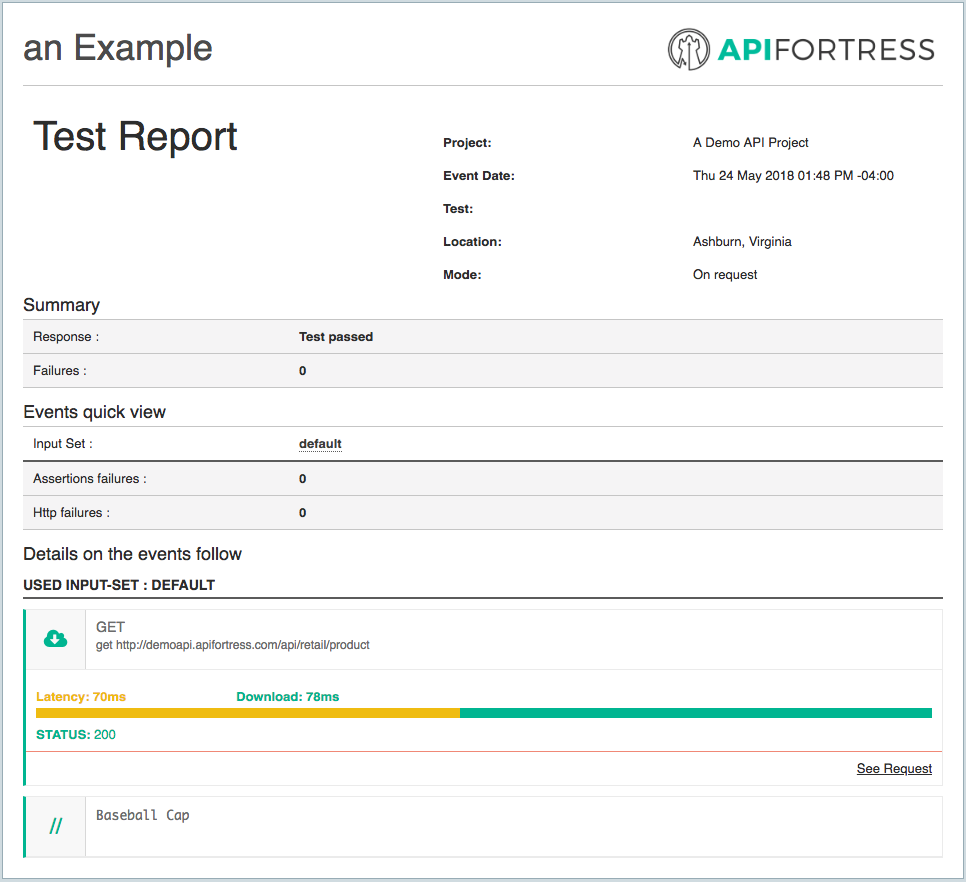

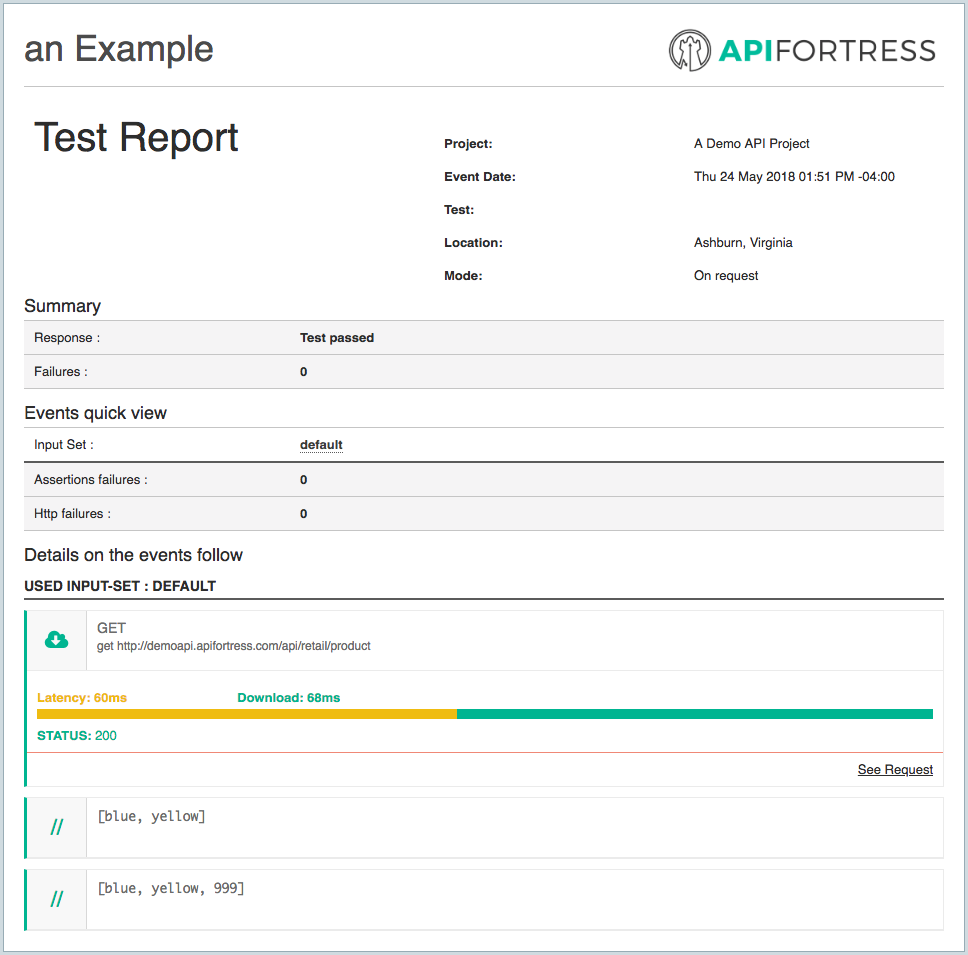

An extremely important point to note is that these key/value pairs are temporary. They expire 24 hours after the last update to the value itself.

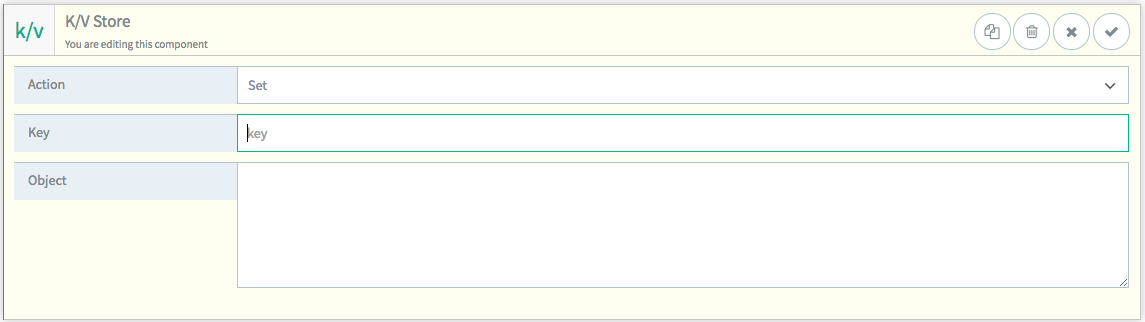

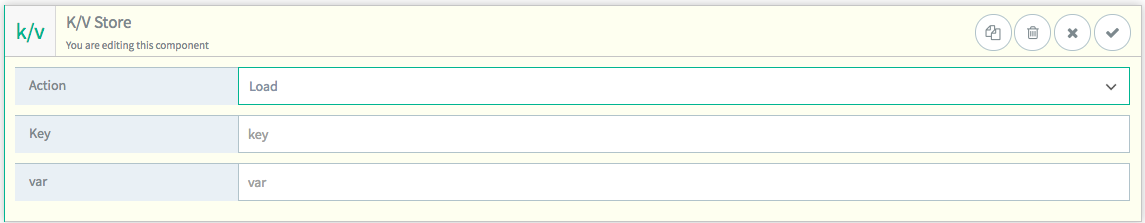

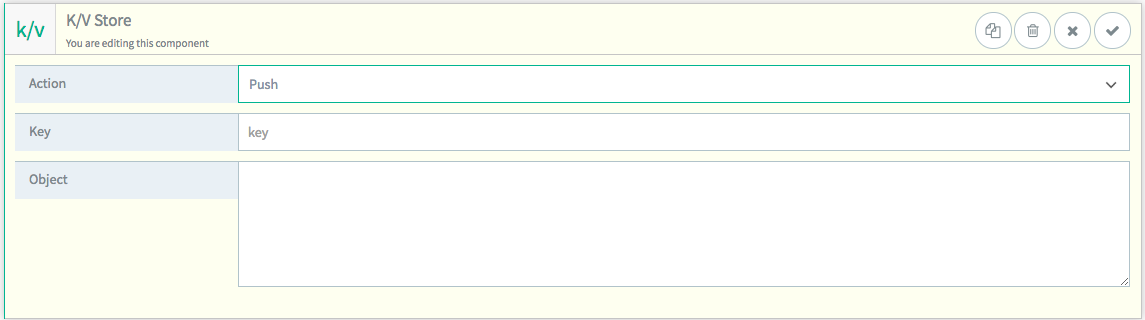

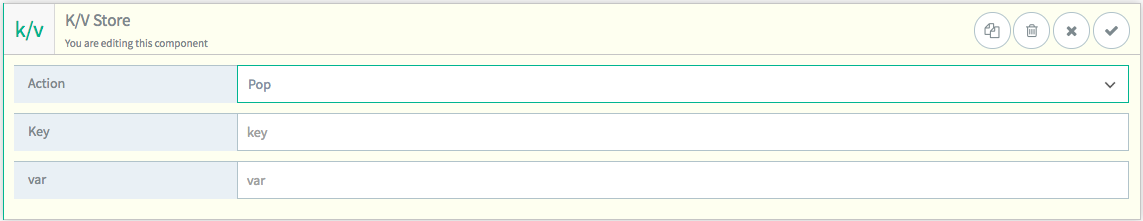

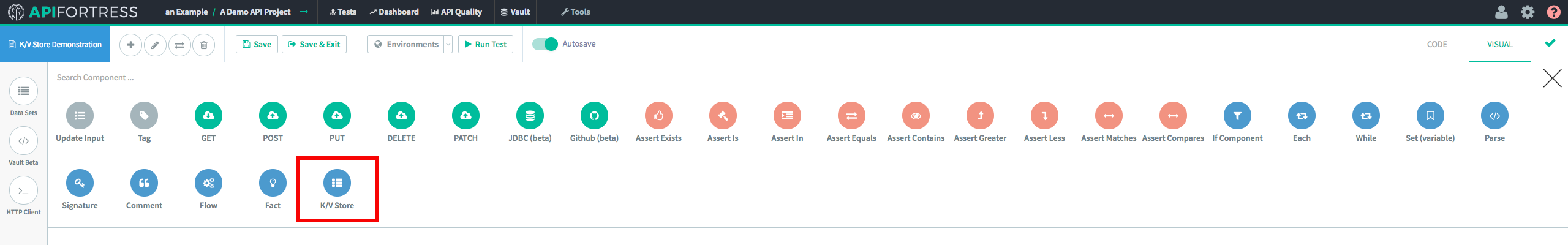

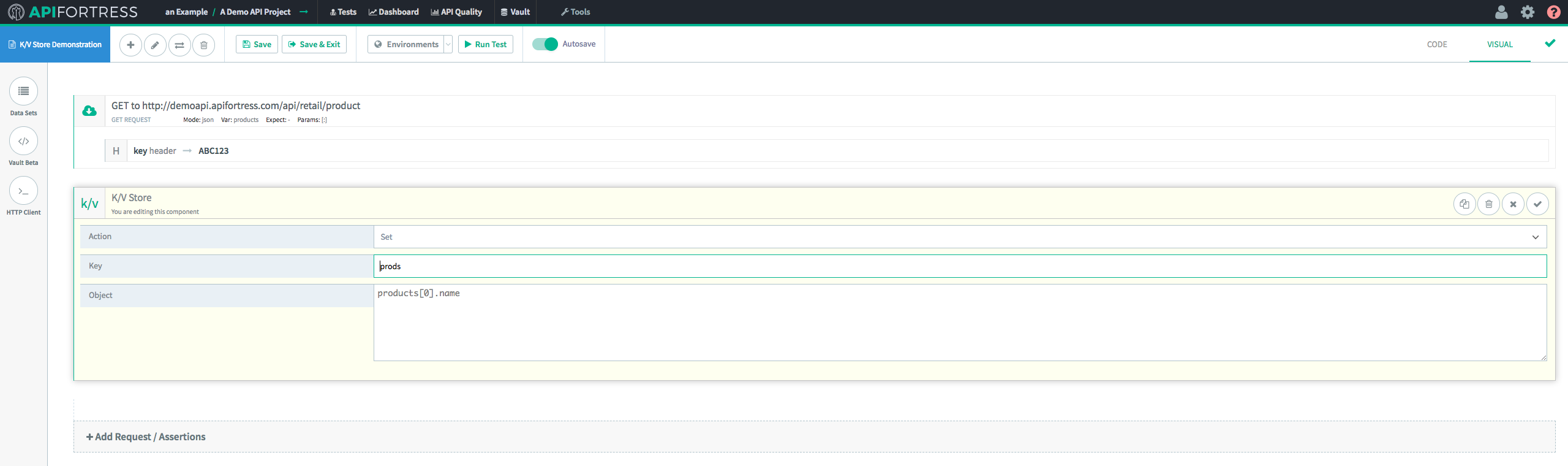

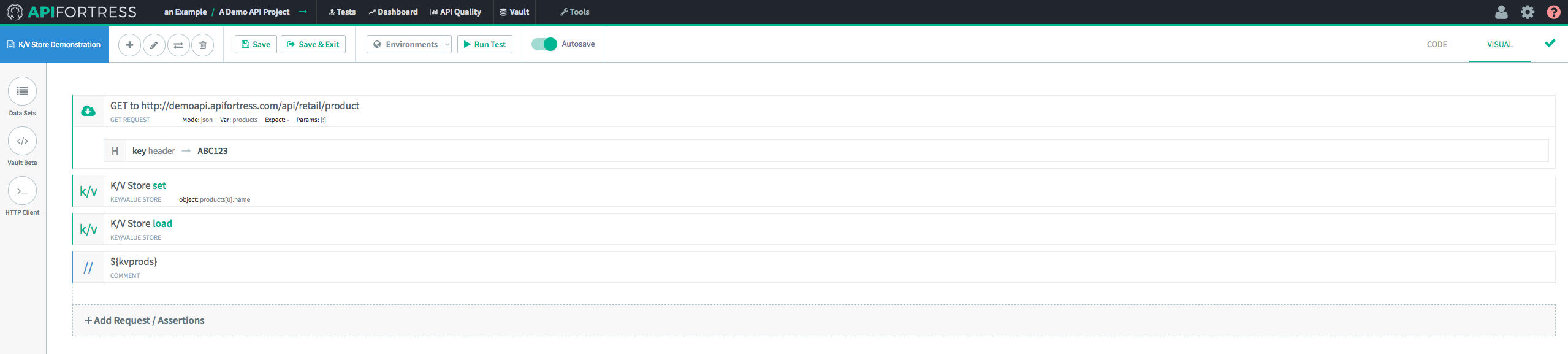

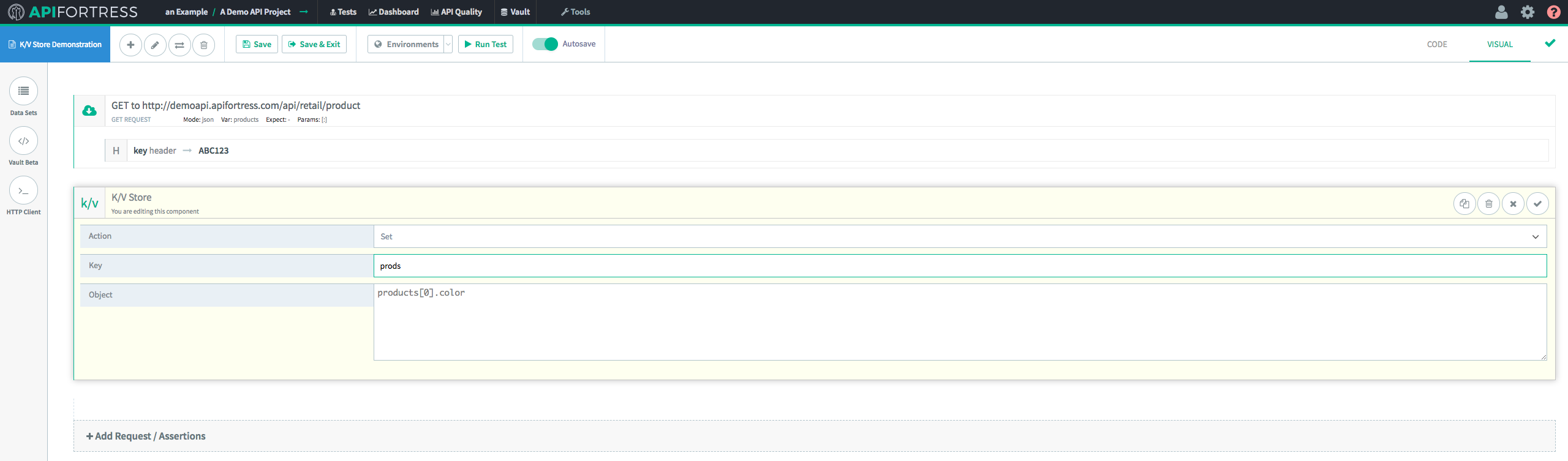

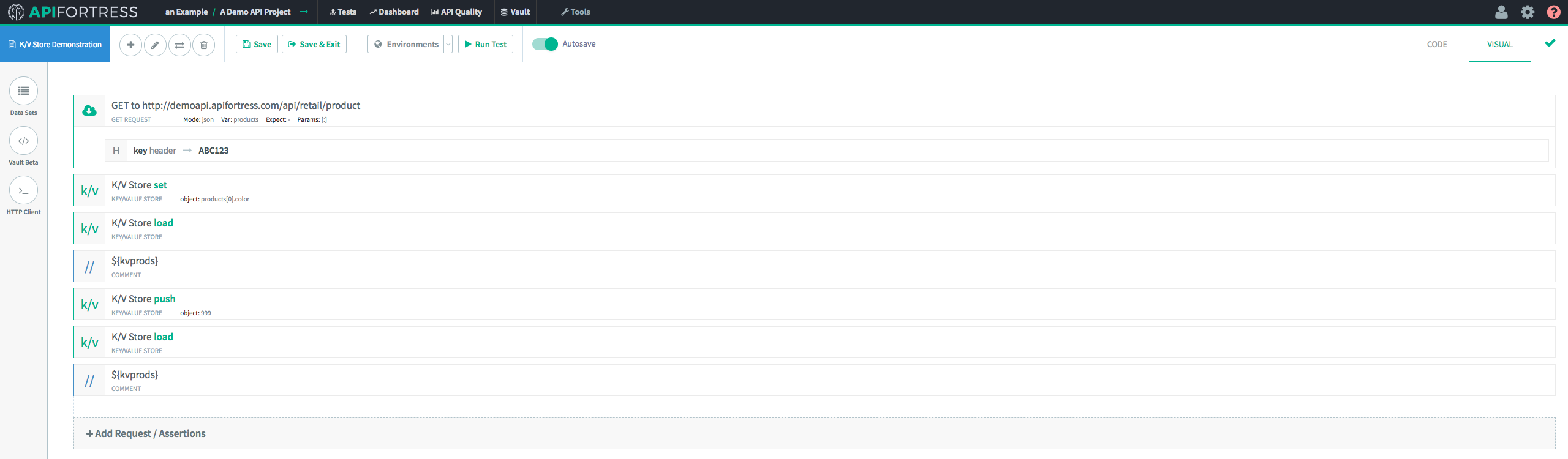

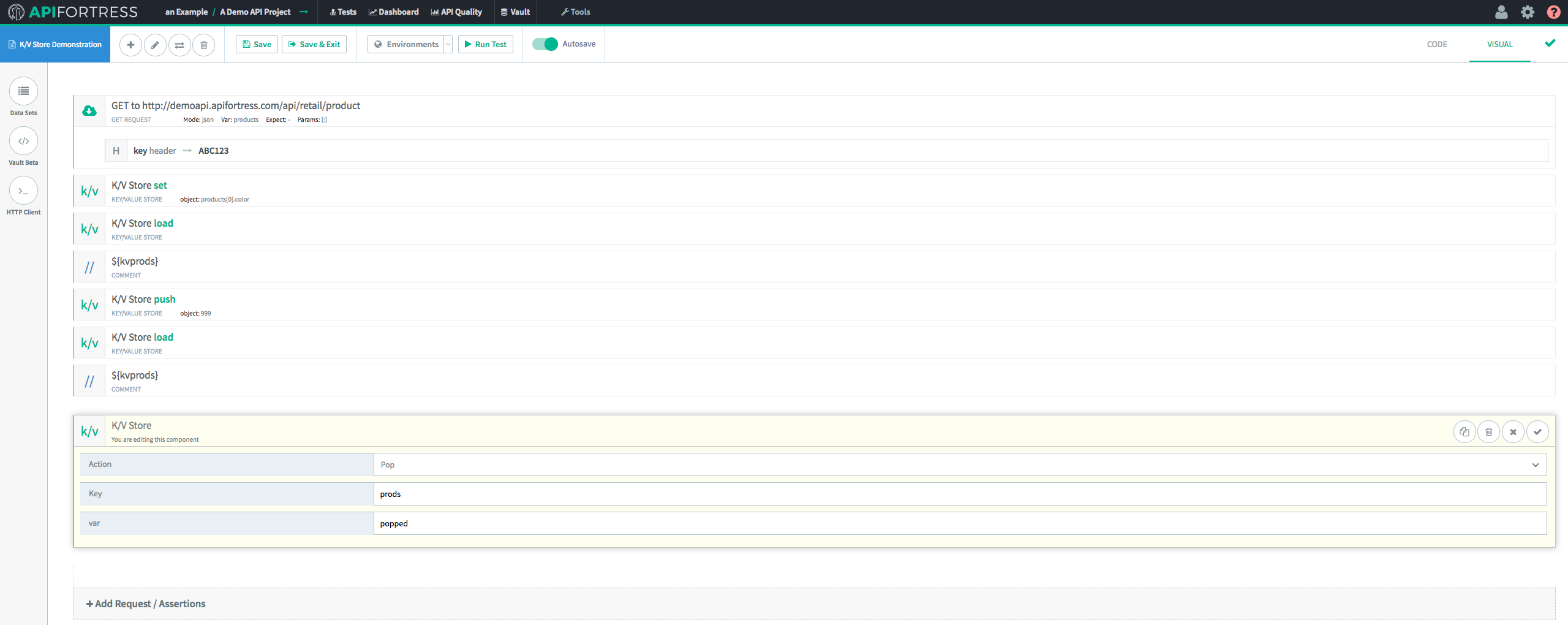

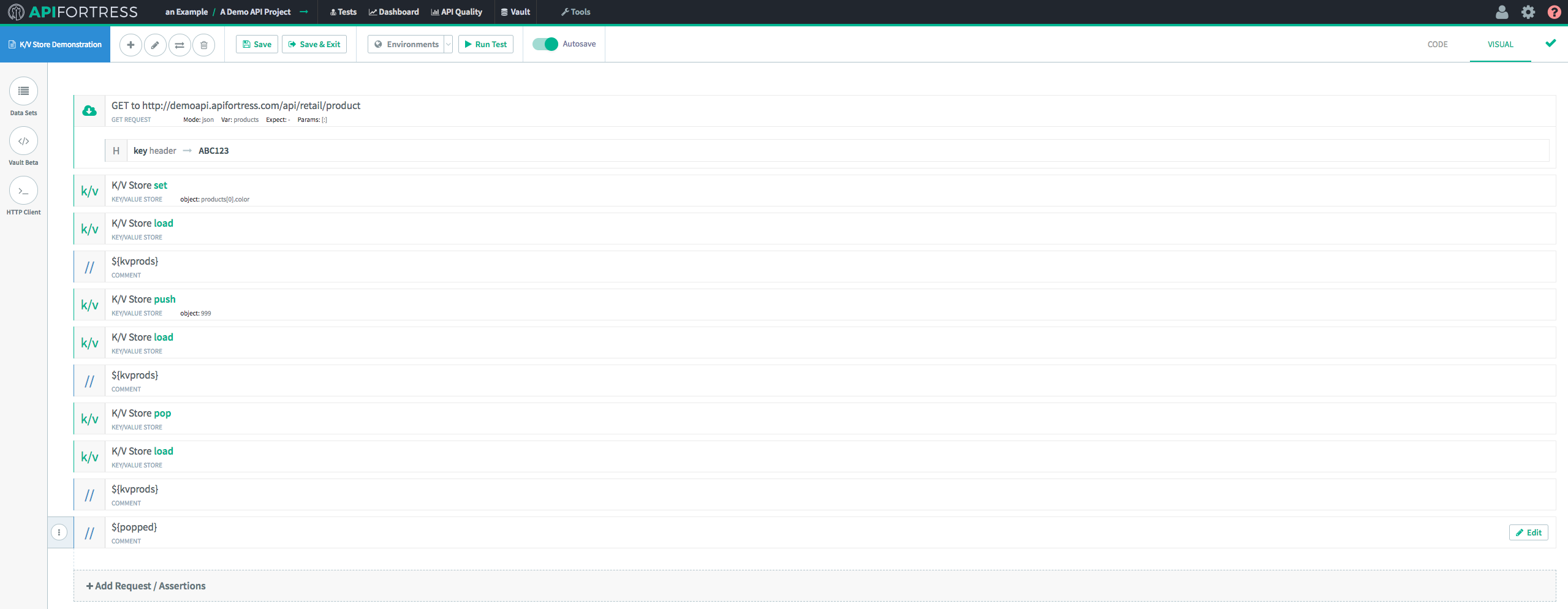

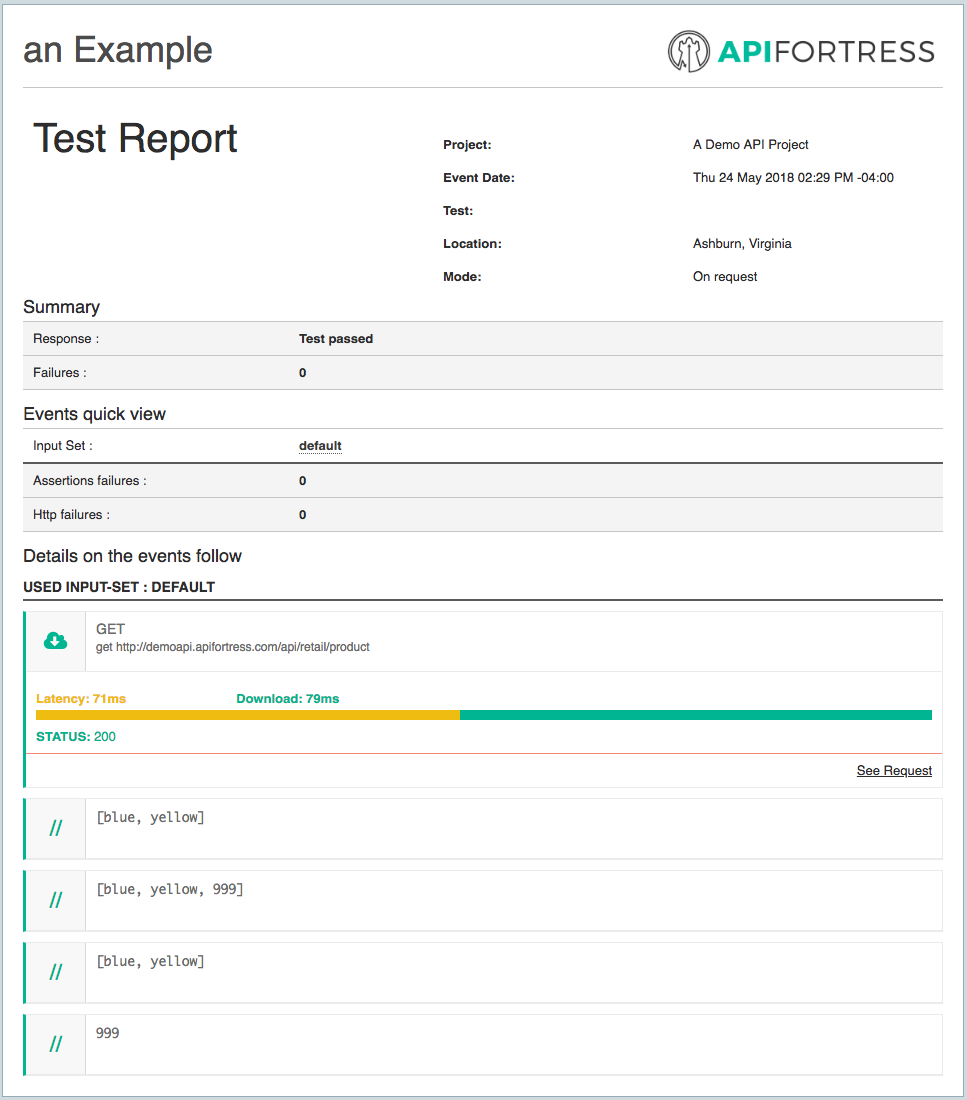

The Key/Value Store Component has 4 methods available for use. They are:

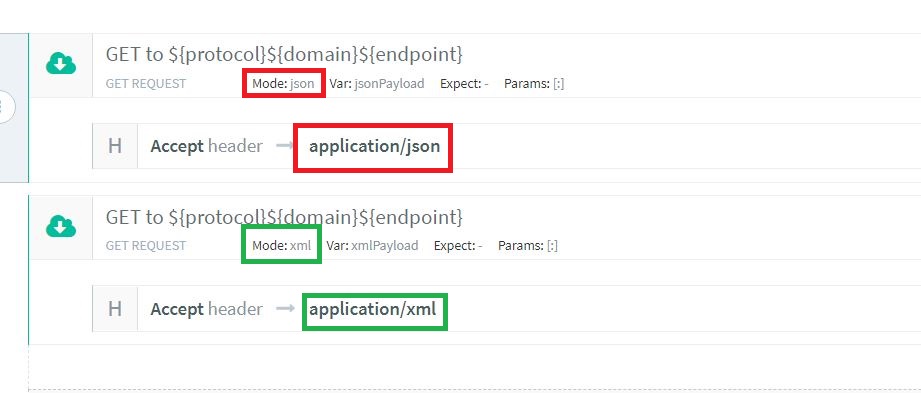

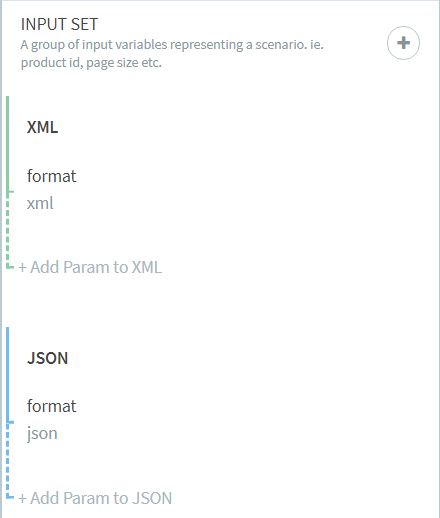

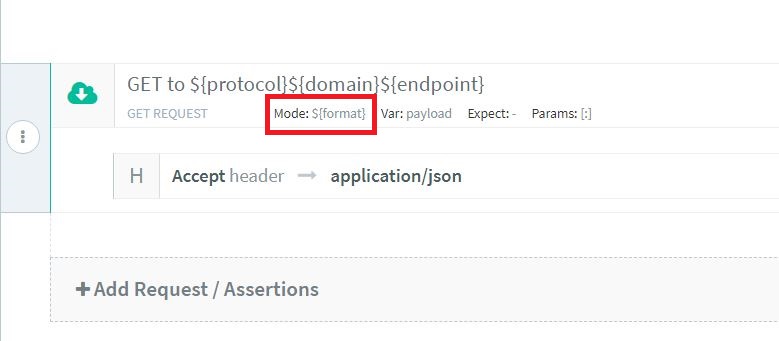

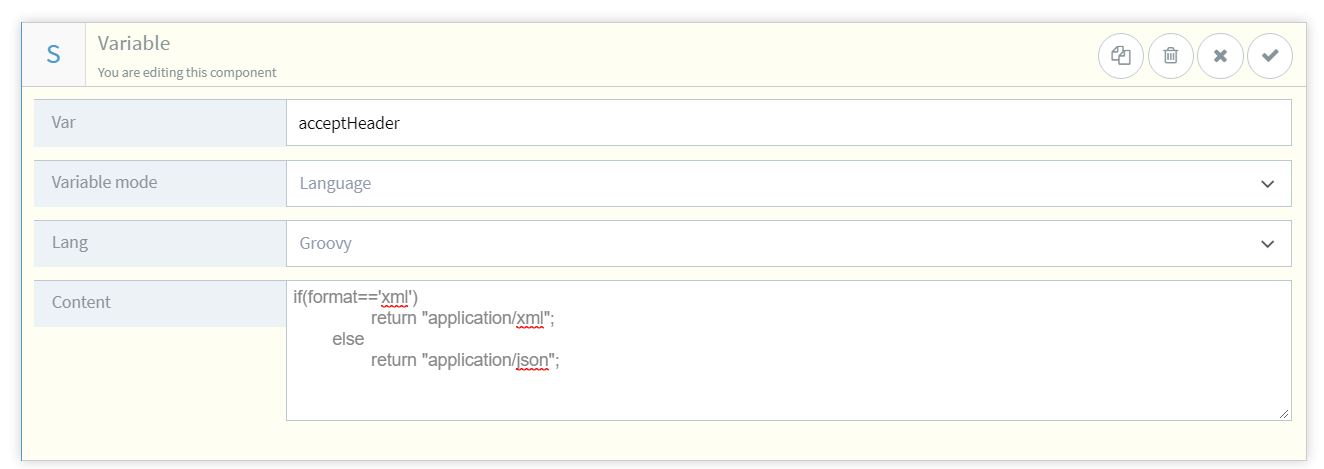

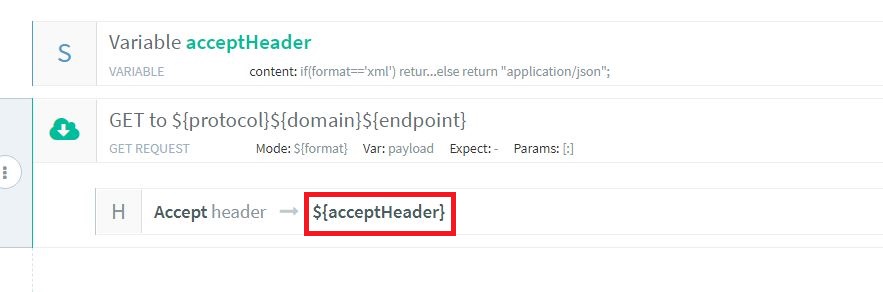

Now, the test will be executed two times; once for ‘XML’ and once for ‘JSON’, ensuring that the header will have the correct value.
